Last year, I helped a B2B SaaS company discover that 40% of the conversions their paid search campaigns were “driving” would have happened anyway. Their attribution dashboard said paid search was their top channel. Incrementality testing told a very different story.

This is the gap most marketing teams never close. They optimize dashboards instead of outcomes. They celebrate correlation while ignoring causation. And they waste budget on channels that look productive but aren’t actually moving the needle.

Incrementality testing fixes this. It’s the closest thing marketers have to a scientific method for answering the question that actually matters: did this marketing activity cause additional conversions that wouldn’t have happened otherwise?

What Is Incrementality Testing?

Incrementality testing measures the true causal impact of a marketing campaign by comparing outcomes between a group exposed to your marketing and a control group that isn’t. It borrows directly from clinical trial methodology: you need a treatment group and a control group to isolate the variable you’re testing.

The “incremental” conversions are those that happened because of your marketing — not just after it. That distinction is everything.

Here’s the core formula:

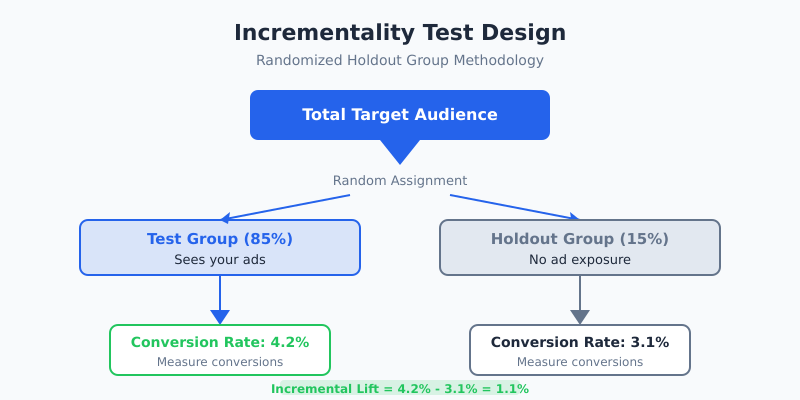

Incremental Lift = (Test Group Conversion Rate – Control Group Conversion Rate) / Control Group Conversion Rate

If your test group converts at 4.2% and your holdout converts at 3.1%, you have a 35.5% incremental lift. That 3.1% baseline? Those people would have converted regardless. Only the difference — the 1.1 percentage points — represents conversions your marketing actually caused.
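
As a quick check on the arithmetic above, here is a minimal Python sketch of the lift formula. The function names are my own, and the second function (the share of test-group conversions that were actually caused by marketing) is an extra illustration rather than part of the formula itself.

```python
def incremental_lift(test_rate: float, control_rate: float) -> float:
    """Relative lift of the test group over the control (holdout) group."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (test_rate - control_rate) / control_rate


def incremental_share(test_rate: float, control_rate: float) -> float:
    """Fraction of test-group conversions that the marketing actually caused."""
    return (test_rate - control_rate) / test_rate


lift = incremental_lift(0.042, 0.031)
share = incremental_share(0.042, 0.031)
print(f"Incremental lift: {lift:.1%}")               # 35.5%
print(f"Share of conversions caused: {share:.1%}")   # 26.2%
```

The second number is the one that tends to surprise people: with these rates, roughly a quarter of the test group's conversions are incremental, and the rest would have happened anyway.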

Why Last-Click and Multi-Touch Attribution Fall Short

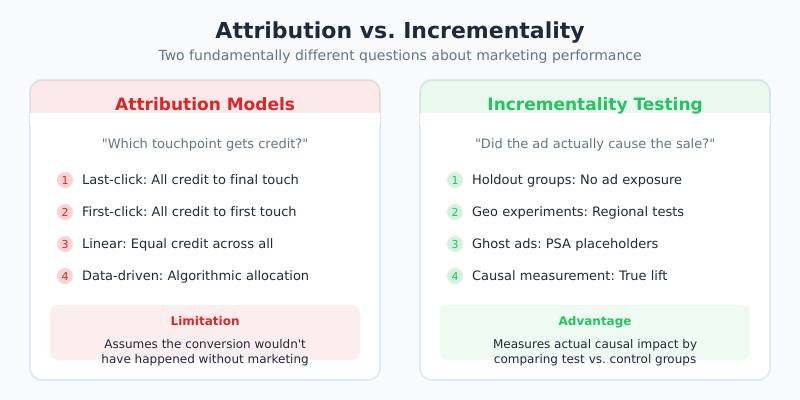

I’ve worked with attribution models for over a decade. They’re useful for understanding the customer journey, but they have a fundamental flaw: they assume the conversion wouldn’t have happened without marketing.

Last-click attribution gives 100% credit to the final touchpoint. If someone was already going to buy your product and happened to click a branded search ad first, that ad gets full credit for a sale it didn’t influence.

Multi-touch attribution (MTA) distributes credit across touchpoints, which sounds better. But it still operates on the same flawed assumption. As Google’s own research on causal inference in advertising has shown, observational data alone cannot establish causation, no matter how sophisticated your attribution algorithm.

Common attribution blind spots include:

- Brand cannibalization: Paid search ads capturing organic clicks that would have happened for free

- Retargeting inflation: Showing ads to people already in your checkout flow

- Cross-device gaps: Missing the full journey when users switch devices

- Cookie deprecation: Losing visibility as tracking becomes harder

- Correlation bias: Heavy spenders see more ads AND buy more, but the ads aren’t the cause

As Rand Fishkin, founder of SparkToro, has noted: “Attribution models tell you what touched the customer. Incrementality tells you what moved the customer. Those are very different things.”

How Incrementality Tests Work: Four Approaches

There isn’t one single way to run incrementality tests. The right approach depends on your channel, budget, and technical capabilities. Here are the four most common methods I’ve seen work in practice.

1. Holdout Group Tests (Intent-to-Treat)

The most straightforward approach. You randomly split your audience: one segment sees your ads, the other doesn’t. After a set period, you compare conversion rates between the two groups.

This is the gold standard for digital channels where you have audience-level targeting control. Facebook, Google, and most DSPs support this natively. The key is true randomization — if your groups differ in any systematic way, your results are worthless.
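
If you run the split yourself rather than relying on a platform's built-in tool, one common way to get a stable, effectively random assignment is to hash each user ID. The sketch below is an assumption about how you might do it, not how any ad platform does it; the experiment name and the 15% holdout are placeholders.

```python
import hashlib


def assign_group(user_id: str, experiment: str, holdout_fraction: float = 0.15) -> str:
    """Deterministically assign a user to 'holdout' or 'test'.

    Hashing the user ID together with the experiment name gives a stable
    bucket that does not depend on any user attribute.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform value in [0, 1)
    return "holdout" if bucket < holdout_fraction else "test"


# Hypothetical user IDs and experiment name, for illustration only.
users = [f"user-{i}" for i in range(100_000)]
groups = [assign_group(u, "paid-search-holdout") for u in users]
holdout_share = groups.count("holdout") / len(groups)
print(f"Holdout share: {holdout_share:.3f}")
```

Because the assignment depends only on the ID and the experiment name, the same user always lands in the same group, and a new experiment name reshuffles everyone.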

2. Geo Experiments

When you can’t randomize at the user level, you randomize at the geographic level. You select matched pairs of markets (similar size, demographics, and historical performance), run your campaign in some markets, and hold others out.

Google’s open-source GeoexperimentsResearch framework was built specifically for this. Geo tests work well for measuring the incrementality of TV, radio, out-of-home, and any channel where user-level holdouts aren’t possible.

The downside: you need enough markets to achieve statistical power, and local factors (weather, events, competitors) can add noise.
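
To make the logic concrete, here is a deliberately simplified sketch that uses the control markets' growth to project what the test markets would have done without the campaign. This is a basic difference-in-differences style estimate, not the method implemented in GeoexperimentsResearch, and the market numbers are invented for illustration.

```python
def geo_lift(pre_test, post_test, pre_control, post_control):
    """Estimate incremental conversions in the test markets.

    The control markets' growth from the pre-period to the test period is
    used to project what the test markets would have done without the campaign.
    """
    control_growth = sum(post_control) / sum(pre_control)
    expected_without_campaign = sum(pre_test) * control_growth
    incremental = sum(post_test) - expected_without_campaign
    return incremental, incremental / expected_without_campaign


# Hypothetical conversions per market (illustrative numbers only).
pre_test, post_test = [500, 300], [600, 360]
pre_control, post_control = [480, 320], [504, 336]

incremental, lift = geo_lift(pre_test, post_test, pre_control, post_control)
print(f"Incremental conversions: {incremental:.0f}, lift: {lift:.1%}")  # 120, 14.3%
```

A real geo test also needs a measure of uncertainty, which this sketch leaves out; that is where the dedicated packages earn their keep.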

3. Ghost Ads (Predicted Ad Exposure)

Ghost ads are clever. Instead of withholding ads entirely from the control group, the platform identifies control users who would have seen your ad based on the auction dynamics and logs that would-be impression (the “ghost ad”). Those users simply see whichever ad would otherwise have won the auction, so no public service announcement (PSA) or blank creative is needed.

This approach, pioneered in academic research and implemented on Google’s ad platforms, solves a major problem with simple holdout tests: it compares exposed users with control users who would have been exposed, so both groups share the same baseline intent to browse content where ads appear.

4. PSA (Charity) Tests

Similar to ghost ads, but simpler. Your control group sees a public service announcement or charity ad instead of your ad. You pay for the impression either way, so it’s a clean comparison of your creative’s impact versus a neutral exposure.

Facebook’s Conversion Lift studies historically used this methodology. It’s especially useful for brand advertising where you’re trying to measure awareness or consideration lift rather than direct-response conversions.

Step-by-Step Guide to Running Your First Incrementality Test

If you’ve never run an incrementality test, start simple. Here’s the process I recommend to teams who are just getting started.

Step 1: Choose Your Highest-Spend Channel

Start where the stakes are highest. If you’re spending $50K/month on paid search, that’s where even a small incrementality insight can save (or reallocate) significant budget. Don’t start with a channel where you spend $2K/month — the numbers won’t be meaningful.

Step 2: Define Your Success Metric

Pick one primary metric before you start. Conversions, revenue, sign-ups, whatever matters most. Resist the temptation to measure everything. Each extra metric you test adds another chance of a false positive, and correcting for that costs statistical power.

Step 3: Set Your Holdout Size

A 10-15% holdout is typical. Yes, you’re “sacrificing” some potential conversions in the holdout group. But the insight you gain will more than compensate. For most campaigns, I recommend 85/15 (test/holdout) as a starting point.

Step 4: Calculate Required Sample Size

This is where most teams skip ahead and regret it later. You need enough volume to detect a statistically significant difference. The formula depends on your baseline conversion rate, the minimum lift you want to detect, and your desired confidence level (usually 95%).

A rough guideline: if your baseline conversion rate is 3% and you want to detect a 20% relative lift (to 3.6%), you’ll need roughly 14,000 users per group at 95% confidence and 80% power, assuming equal-sized groups. Lower baseline rates, smaller expected lifts, or uneven test/holdout splits require larger samples.
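
For anyone who wants to run the numbers themselves, here is a sketch of the standard normal-approximation formula for comparing two proportions, using only Python's standard library. It assumes a two-sided test and equal-sized groups, and the function name is mine. Under those particular assumptions the 3% baseline, 20% lift case works out to roughly 14,000 users per group; stricter power targets or uneven splits push the requirement higher.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(baseline, relative_lift, alpha=0.05, power=0.80):
    """Users needed per group for a two-sided two-proportion z-test (equal groups)."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the confidence level
    z_power = NormalDist().inv_cdf(power)          # critical value for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


for lift in (0.10, 0.20, 0.30):
    n = sample_size_per_group(0.03, lift)
    print(f"{lift:.0%} lift: {n:,} users per group")
```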

Step 5: Run the Test for 2-4 Weeks

Don’t peek early. Seriously. Checking results daily and stopping when things “look significant” is a recipe for false positives. Set your test duration in advance based on your sample size calculation and stick to it.

Account for day-of-week effects by running in full-week increments. Two weeks is the minimum for most campaigns; four weeks is better for longer purchase cycles.
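
If the warning about peeking sounds overcautious, a small simulation makes the point. The sketch below runs A/A tests (both groups share the same true conversion rate, so any “significant” result is a false positive) and compares stopping at the first significant day against analyzing once at the end. The traffic numbers, conversion rate, and seed are arbitrary assumptions.

```python
import random
from math import sqrt
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_a / n_a - conv_b / n_b) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate(n_tests=300, days=14, users_per_day=200, rate=0.10, seed=7):
    """Run A/A tests (no true lift) and count false positives two ways."""
    rng = random.Random(seed)
    peeking_hits = fixed_hits = 0
    for _ in range(n_tests):
        conv_a = conv_b = n = 0
        significant_on_any_day = False
        for _ in range(days):
            conv_a += sum(rng.random() < rate for _ in range(users_per_day))
            conv_b += sum(rng.random() < rate for _ in range(users_per_day))
            n += users_per_day
            if p_value(conv_a, n, conv_b, n) < 0.05:
                significant_on_any_day = True  # a daily peeker would have stopped here
        peeking_hits += significant_on_any_day
        fixed_hits += p_value(conv_a, n, conv_b, n) < 0.05
    return peeking_hits / n_tests, fixed_hits / n_tests


peeking_rate, fixed_rate = simulate()
print(f"False positives when stopping at the first significant day: {peeking_rate:.1%}")
print(f"False positives when analyzing once at the end: {fixed_rate:.1%}")
```

The single end-of-test analysis should land near the nominal 5%, while the daily-peeking rate comes out several times higher.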

Step 6: Analyze Results

Compare your test and control groups on your primary metric. Calculate the incremental lift and check for statistical significance (p-value below 0.05). If the result isn’t statistically significant, that’s a finding too: either your marketing’s incremental impact is very small or your test needs more volume.
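
The analysis step can be sketched in a few lines. This uses a pooled two-proportion z-test, which is one reasonable choice among several rather than the only valid one, and the conversion counts are hypothetical figures for an 85/15 split.

```python
from math import sqrt
from statistics import NormalDist


def analyze_lift(test_conv, test_n, control_conv, control_n):
    """Return relative lift and two-sided p-value (pooled two-proportion z-test)."""
    test_rate = test_conv / test_n
    control_rate = control_conv / control_n
    pooled = (test_conv + control_conv) / (test_n + control_n)
    se = sqrt(pooled * (1 - pooled) * (1 / test_n + 1 / control_n))
    z = (test_rate - control_rate) / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return (test_rate - control_rate) / control_rate, p


# Hypothetical counts for an 85/15 split (illustrative only).
lift, p = analyze_lift(test_conv=3570, test_n=85_000, control_conv=465, control_n=15_000)
print(f"Lift: {lift:.1%}, p-value: {p:.4f}")
print("Significant at 95%" if p < 0.05 else "Not significant at 95%")
```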

Statistical Significance and Sample Sizes: The Math That Matters

I won’t sugarcoat this: incrementality testing requires enough volume to be meaningful. The two enemies of valid incrementality tests are insufficient sample size and premature analysis.

Key statistical concepts:

- Confidence level (typically 95%): How strictly you guard against false positives; at 95%, a channel with no real effect still looks “significant” about 5% of the time

- Statistical power (typically 80%): The probability of detecting a real effect when one exists

- Minimum detectable effect (MDE): The smallest lift you want to be able to identify

- Baseline conversion rate: Your current conversion rate without the test variable

These factors trade off against one another, and sample size is the cost. The data you need grows roughly with the inverse square of the effect you want to detect, so halving your MDE about quadruples the required sample. Want higher confidence or power? More data again. This is why incrementality testing tends to work best for higher-spend campaigns: they generate the volume needed for reliable conclusions.
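
To see how volume and detectable effect relate, the sketch below flips the usual question around: given a fixed number of users per group, what is the smallest relative lift you could reasonably expect to detect? It is an approximation that applies the baseline variance to both groups, and the function name and sample sizes are illustrative assumptions.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_lift(baseline, n_per_group, alpha=0.05, power=0.80):
    """Approximate smallest relative lift detectable with n users per group."""
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    # Approximation: uses the baseline variance for both groups.
    absolute_difference = z * sqrt(2 * baseline * (1 - baseline) / n_per_group)
    return absolute_difference / baseline


for n in (5_000, 20_000, 80_000):
    mde = minimum_detectable_lift(0.03, n)
    print(f"{n:>6,} users per group -> minimum detectable lift of about {mde:.1%}")
```

Each row uses four times the sample of the one before it, and the detectable lift only halves each time.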

As Avinash Kaushik, former Digital Marketing Evangelist at Google, has stated: “The biggest mistake in incrementality testing isn’t running the test wrong — it’s not running it at all because you’re afraid of the answer.”

Real-World Examples

Paid Search Brand Keywords

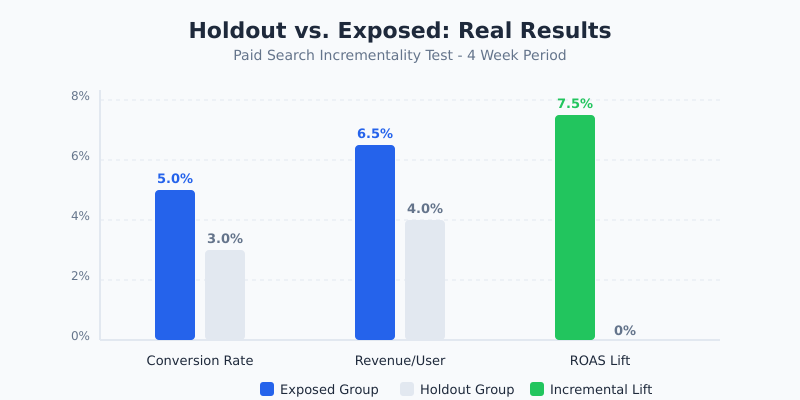

This is the classic incrementality test, and the results almost always surprise people. A major ecommerce brand I advised ran a holdout test on branded search terms and found that 65% of their branded paid clicks would have come through organic results anyway. They were essentially paying for traffic they already owned.

They didn’t eliminate branded search entirely — it still had defensive value against competitors — but they reduced branded spend by 50% and reallocated to non-brand terms with higher incremental value. Net result: same revenue, lower cost.

Facebook Prospecting Campaigns

Using Meta’s native Conversion Lift tool, a SaaS company I worked with tested their cold-audience prospecting campaigns. The lift study showed a genuine 28% incremental lift in free trial sign-ups — validating that their prospecting spend was reaching people who genuinely wouldn’t have found the product otherwise.

Interestingly, their retargeting campaigns showed only a 6% incremental lift. Most of those “retargeted” conversions would have happened naturally through return visits. They shifted budget from retargeting to prospecting and saw overall CAC decrease by 18%.

Display Advertising

Display is where incrementality testing delivers the most humbling results. In my experience, programmatic display typically shows incremental lifts of 0.5-2% — far lower than what last-click dashboards suggest. This doesn’t mean display is worthless, but it means the true cost per incremental conversion is much higher than reported.
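
Here is a sketch of that cost calculation. It estimates baseline conversions by applying the holdout's conversion rate to the exposed audience, then divides spend by what is left. The campaign figures are hypothetical (chosen to give a 2% lift), and the “naive” CPA simply credits every conversion to the campaign.

```python
def incremental_cpa(spend, test_conversions, test_users, control_rate):
    """Cost per conversion the campaign actually caused.

    Baseline conversions are estimated by applying the holdout's conversion
    rate to the exposed audience.
    """
    baseline = control_rate * test_users
    incremental = test_conversions - baseline
    if incremental <= 0:
        return float("inf")  # no measurable incremental conversions
    return spend / incremental


# Hypothetical display campaign (illustrative numbers only).
spend = 20_000
test_users, test_conversions = 500_000, 5_100  # exposed group converts at 1.02%
control_rate = 0.0100                          # holdout converts at 1.00%

naive_cpa = spend / test_conversions
true_cpa = incremental_cpa(spend, test_conversions, test_users, control_rate)
print(f"Naive CPA: ${naive_cpa:.2f}")
print(f"Cost per incremental conversion: ${true_cpa:.2f}")
```

With these made-up numbers the cost per incremental conversion comes out about fifty times the naive figure.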

Tools That Support Incrementality Testing

You don’t necessarily need specialized tools to run incrementality tests, but they make the process significantly easier.

- Meta Conversion Lift: Built into Facebook Ads Manager for holdout-based lift studies on Meta platforms. Free to use with sufficient spend.

- Google’s Geo Experiments (open-source): R package for designing and analyzing geo-based incrementality tests. Free and well-documented.

- Measured: A dedicated incrementality measurement platform that works across channels. Best for teams spending $500K+ per month.

- Haus: Focuses on geo-based experimentation with a user-friendly interface. Good for mid-market brands.

- Google Ads Conversion Lift: Available for larger Google Ads accounts. Measures the incrementality of Google campaigns specifically.

- CausalImpact (R package): Google’s open-source Bayesian structural time-series tool for estimating causal impact from time-series data. Free.

- Custom solutions: Many sophisticated teams build their own using Python (scipy, statsmodels) or R. This gives maximum flexibility but requires statistical expertise.

When NOT to Bother with Incrementality Testing

Incrementality testing isn’t always worth the effort. Here’s when I tell teams to skip it:

- Low-spend channels (<$5K/month): You won’t generate enough volume for statistical significance. Focus your energy on channels where the budget impact is meaningful.

- Brand-new campaigns: Let campaigns optimize and stabilize for at least 4-6 weeks before testing incrementality. Testing too early produces unreliable results.

- One-time promotions: If it’s a flash sale or seasonal push, there isn’t time for proper test design. Use pre/post analysis instead.

- When you can’t act on results: If political or organizational constraints mean you can’t reallocate budget regardless of what the test shows, don’t waste the effort.

- Channels with no holdout capability: Some channels (like podcast sponsorships or billboards) don’t allow clean holdout groups. Geo experiments can work, but they require multiple markets and longer time frames.

My Experience: What Ten Years of Incrementality Testing Has Taught Me

I’ve run or supervised incrementality tests across dozens of SaaS and ecommerce brands. Here are the patterns that keep showing up:

Branded search is almost always over-credited. In every single branded search incrementality test I’ve been involved with, the true incremental value was 30-70% lower than what attribution models reported. This is the single biggest budget optimization opportunity for most companies.

Prospecting channels are often under-credited. Top-of-funnel channels like content marketing, social prospecting, and podcast advertising consistently show higher incremental value than last-click gives them credit for. They introduce people to your brand who genuinely wouldn’t have found you otherwise.

Retargeting is the most over-hyped channel. Sorry, retargeting vendors. In my tests, retargeting’s incremental lift typically ranges from 2% to 10%. Those “900% ROAS” dashboards are measuring correlation, not causation.

The first test is always the hardest. Getting organizational buy-in to “turn off ads for some people” is a tough sell. Frame it as risk management: would you rather unknowingly waste 40% of your budget forever, or sacrifice 15% of impressions for two weeks to find out the truth?

Frequently Asked Questions

How long should an incrementality test run?

Most incrementality tests should run for at least two weeks, and two to four weeks is typical. The exact duration depends on your traffic volume, baseline conversion rate, and the minimum lift you want to detect. For longer sales cycles (B2B SaaS with 30+ day cycles), extend to 4-6 weeks. Always run in full-week increments to account for day-of-week patterns, and never stop a test early just because early results look promising or disappointing.

What’s the difference between A/B testing and incrementality testing?

A/B testing compares two versions of something (landing pages, ad creatives, email subject lines) to see which performs better. Incrementality testing compares showing marketing versus not showing marketing to measure whether the marketing itself drives additional conversions. A/B testing optimizes execution; incrementality testing validates the channel’s fundamental value. You need both, but they answer different questions.

Can small businesses run incrementality tests?

It’s challenging but not impossible. The main barrier is volume — you need enough conversions in both test and control groups to reach statistical significance. If you’re getting fewer than 500 conversions per month on a channel, a holdout test likely won’t produce reliable results. Geo experiments can work for local businesses with multiple locations. For small businesses, I recommend starting with simple on/off tests: pause a channel entirely for 2-4 weeks and measure the impact on overall revenue.

How large should the holdout group be?

A holdout of 10-15% of your audience is standard practice. This gives you enough statistical power in the control group while minimizing the revenue you forgo by not showing ads to that segment. For very high-spend campaigns ($1M+/month), you can go as low as 5%. For lower-spend campaigns, 15-20% ensures adequate sample sizes. Remember: the “cost” of the holdout is temporary, but the budget optimization insights are permanent.
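
The trade-off behind those percentages can be sketched with the same normal-approximation math used for sample sizes, extended to uneven splits. The function below is my own illustration, and the 3% baseline and 20% lift are assumed inputs; it estimates the total audience needed for a given holdout fraction.

```python
from math import ceil
from statistics import NormalDist


def total_audience_needed(baseline, relative_lift, holdout_fraction,
                          alpha=0.05, power=0.80):
    """Total users needed when only `holdout_fraction` of them are held out."""
    p_control = baseline
    p_test = baseline * (1 + relative_lift)
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    # Variance of the rate difference, scaled by each group's share of the audience.
    variance = (p_test * (1 - p_test) / (1 - holdout_fraction)
                + p_control * (1 - p_control) / holdout_fraction)
    return ceil(z ** 2 * variance / (p_test - p_control) ** 2)


for fraction in (0.05, 0.10, 0.15, 0.20, 0.50):
    total = total_audience_needed(0.03, 0.20, fraction)
    held_out = round(total * fraction)
    print(f"{fraction:.0%} holdout: {total:,} total users, {held_out:,} held out")
```

Smaller holdouts forgo less revenue but need a much larger total audience to reach the same power, which is why a 5% holdout is only practical at very high volume.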

What should I do if my incrementality test shows zero lift?

A zero-lift result doesn’t necessarily mean the channel is worthless — it means the channel isn’t driving incremental conversions above what would happen organically. First, verify your test design (proper randomization, sufficient sample size, adequate duration). If the test was well-designed, consider reducing spend on that channel by 25-50% and re-testing. Sometimes reducing spend still produces the same conversions at lower cost. If results consistently show no lift, reallocate that budget to channels with proven incremental value.